Tech Tuesday: How Genealogix Stores Your Data Without a Cloud Database

Deep dive into IndexedDB, encrypted .gnlx bundles, and managing the AI generation lifecycle.

Welcome to the first installment of Tech Tuesday!

When I first started building Genealogix, I had a strict, non-negotiable rule: zero cloud databases. To fulfill the mission of helping people stop renting their history, user data could not live on a centralized server where it could be data-mined or held behind a subscription paywall.

But building a rich, AI-powered web application without a backend database introduces some massive engineering hurdles. Today, I want to pull back the curtain and show you exactly how Genealogix handles data persistence, cloud syncing, and AI artifact storage entirely in the browser.

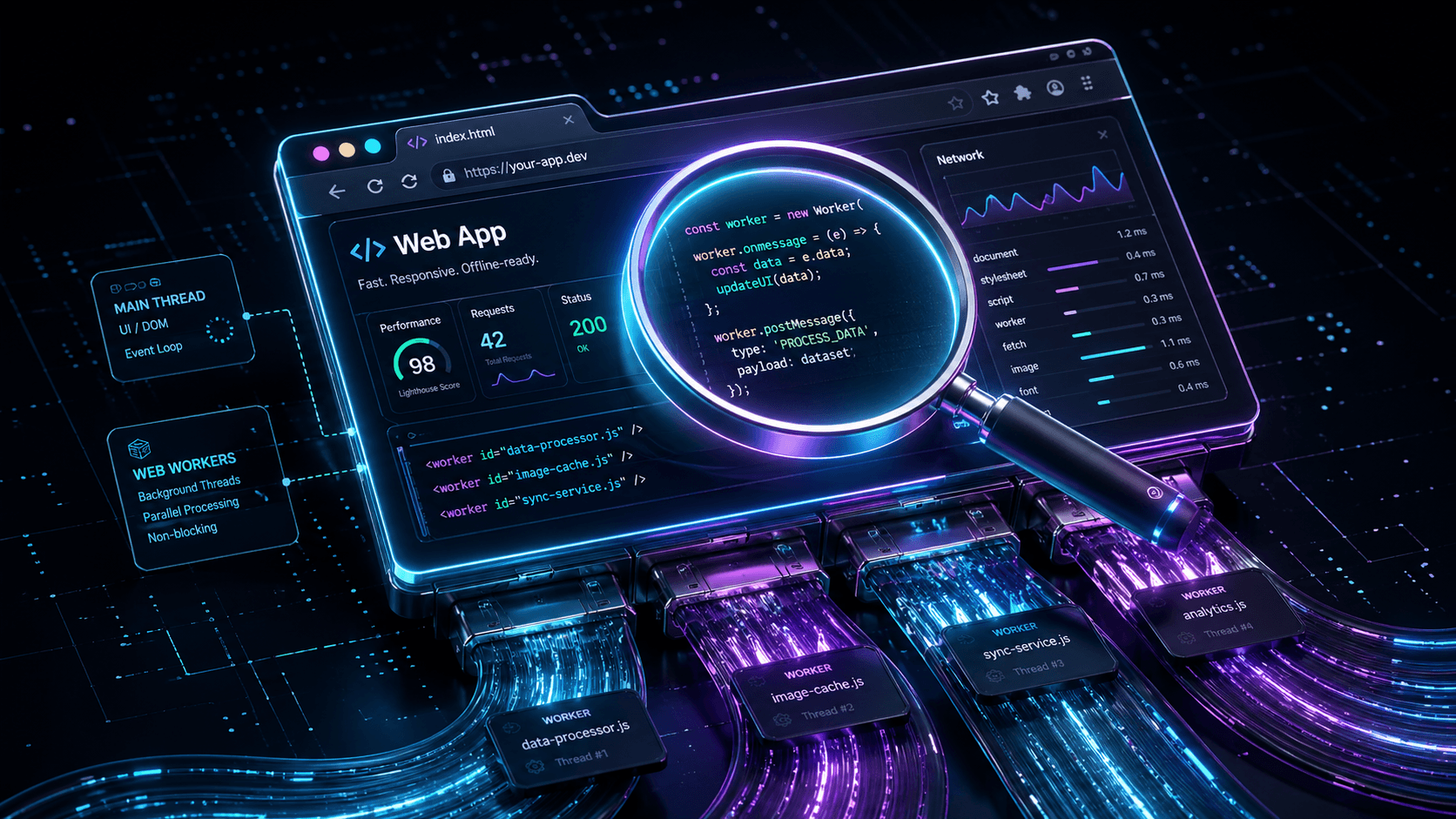

The Core Engine: IndexedDB & At-Rest Encryption

Because a serious genealogist's family tree can contain thousands of individuals and high-resolution documents, standard localStorage (typically capped at around 5 MB per origin) wasn't going to cut it.

Genealogix relies heavily on IndexedDB. When you add a person or a family tie, it is written directly to an IndexedDB database inside your browser. However, browser storage isn't inherently protected from malicious extensions. To solve this, Genealogix implements a strict at-rest encryption pattern.

Before a transaction is ever opened to write a record to IndexedDB, the payload is serialized and encrypted. We store the indexing keys (like personId) in plain text so we can still run lightning-fast queries, but the actual content body is stored as an encrypted string.
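To make the pattern concrete, here is a minimal TypeScript sketch. It is illustrative rather than the production Genealogix code: the "people" store name, the record shapes, and the choice of AES-GCM through the Web Crypto API are all assumptions, and the key is assumed to be a CryptoKey the caller already holds. One practical reason to encrypt first is that an IndexedDB transaction goes inactive if you await unrelated async work (such as encryption) while it is open.

```typescript
// Hypothetical record shapes, for illustration only.
interface PersonRecord {
  personId: string;
  name: string;
  birthYear?: number;
}

interface StoredRecord {
  personId: string; // plain text, so it can serve as the lookup key
  iv: string;       // base64-encoded AES-GCM nonce
  body: string;     // base64-encoded ciphertext of the serialized record
}

// Encode raw bytes as base64 so they can be stored as a string.
function toBase64(bytes: Uint8Array): string {
  let binary = "";
  for (let i = 0; i < bytes.length; i++) {
    binary += String.fromCharCode(bytes[i]);
  }
  return btoa(binary);
}

// Serialize and encrypt the record. Only personId stays readable.
async function encryptRecord(key: CryptoKey, person: PersonRecord): Promise<StoredRecord> {
  const iv = crypto.getRandomValues(new Uint8Array(12)); // fresh nonce per record
  const plaintext = new TextEncoder().encode(JSON.stringify(person));
  const ciphertext = await crypto.subtle.encrypt({ name: "AES-GCM", iv }, key, plaintext);
  return {
    personId: person.personId,
    iv: toBase64(iv),
    body: toBase64(new Uint8Array(ciphertext)),
  };
}

// Encrypt first, then open the transaction and write synchronously inside it.
async function savePerson(db: IDBDatabase, key: CryptoKey, person: PersonRecord): Promise<void> {
  const stored = await encryptRecord(key, person);
  await new Promise<void>((resolve, reject) => {
    const tx = db.transaction("people", "readwrite"); // assumed store name
    tx.objectStore("people").put(stored);
    tx.oncomplete = () => resolve();
    tx.onerror = () => reject(tx.error);
    tx.onabort = () => reject(tx.error);
  });
}
```

In this sketch the "people" store would be created with personId as its key path, so lookups by ID never need to touch the encrypted body.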

The Sync Problem: Enter the .gnlx Bundle

If the data only lives in your laptop's browser, what happens when you want to look at your tree on your phone?

Instead of routing your data through a proprietary server to sync devices, I built a custom file format: the .gnlx bundle.

When you enable Cloud Sync, the app gathers your encrypted IndexedDB records, packages them into a compressed .gnlx JSON structure, and pushes that single file directly to your personal Google Drive. When you open Genealogix on your phone, it simply fetches that .gnlx file from your Drive, decrypts the records that have changed, and updates your mobile IndexedDB. You get all the seamless cross-device syncing of a modern web app, but you own the storage bucket.
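As a rough illustration of the packaging and merge steps, here is a small TypeScript sketch. The bundle fields (formatVersion, exportedAt, records), the updatedAt timestamp, and the newest-record-wins merge rule are assumptions made for the example, not the actual .gnlx specification. Compression and the Google Drive upload are left out to keep it short.

```typescript
// Hypothetical shapes: the real .gnlx layout may differ.
interface SyncRecord {
  id: string;
  updatedAt: number; // assumed last-modified timestamp (ms since epoch)
  iv: string;        // encryption nonce, stored alongside the ciphertext
  body: string;      // encrypted payload; it stays encrypted inside the bundle
}

interface GnlxBundle {
  formatVersion: number;
  exportedAt: number;
  records: SyncRecord[];
}

// Package the already-encrypted records into one JSON-friendly structure.
function buildBundle(records: SyncRecord[], now: number = Date.now()): GnlxBundle {
  return { formatVersion: 1, exportedAt: now, records: records.slice() };
}

// Turn the bundle into the text that would be compressed and uploaded.
function serializeBundle(bundle: GnlxBundle): string {
  return JSON.stringify(bundle);
}

// Merge a downloaded bundle into local records.
// Assumed rule: for each id, the record with the newer updatedAt wins.
function mergeBundle(local: SyncRecord[], bundle: GnlxBundle): SyncRecord[] {
  const merged = new Map<string, SyncRecord>();
  for (const rec of local) {
    merged.set(rec.id, rec);
  }
  for (const rec of bundle.records) {
    const existing = merged.get(rec.id);
    if (!existing || rec.updatedAt > existing.updatedAt) {
      merged.set(rec.id, rec);
    }
  }
  return Array.from(merged.values());
}
```

Because the records are encrypted before they are bundled, the file sitting in Drive exposes only IDs and timestamps in this sketch, never the record contents.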

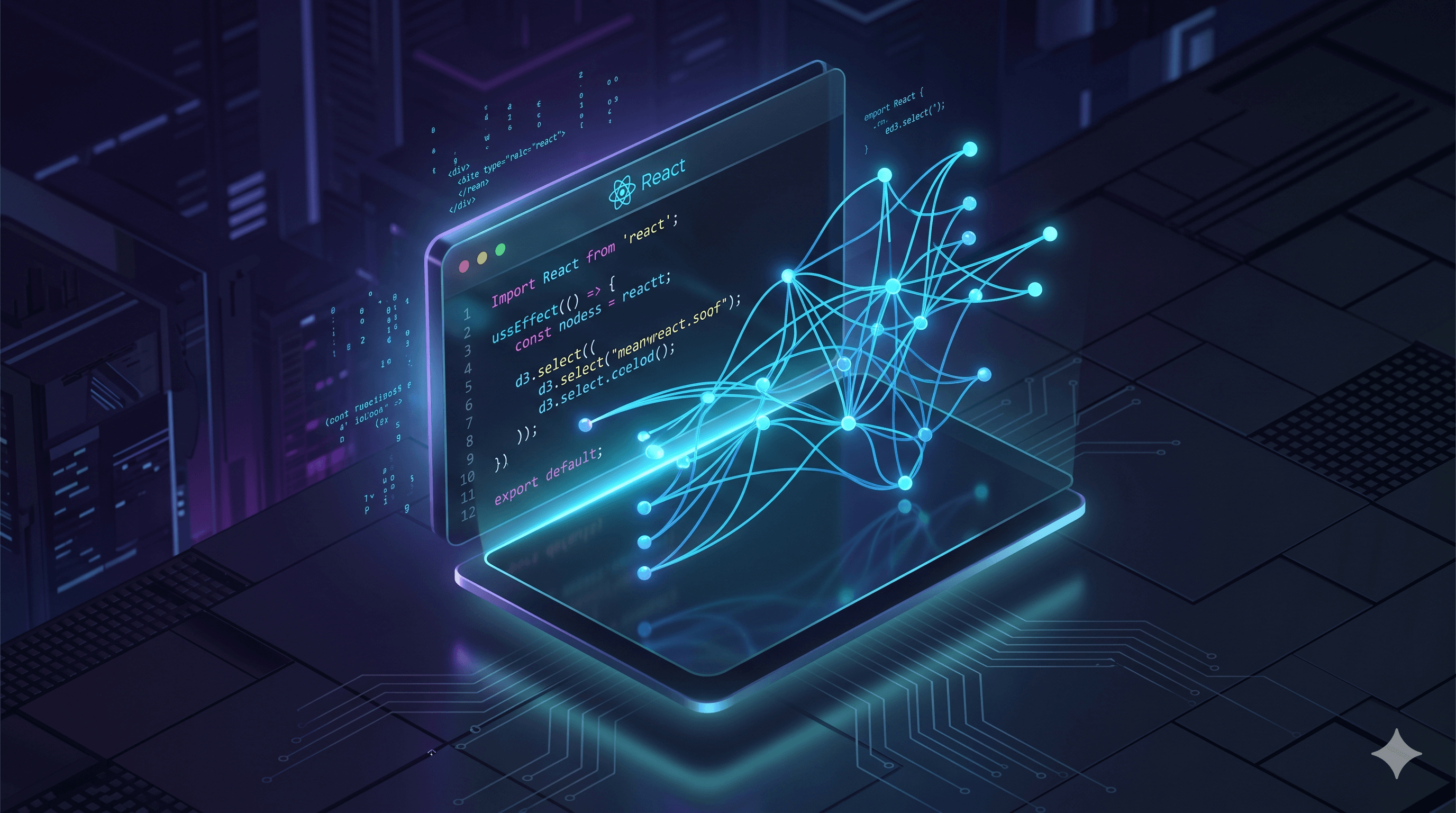

The AI Data Dilemma: Caches vs. Durable Artifacts

This week, I tackled one of the hardest architectural challenges of building an AI-native app: managing the lifecycle of generated content.

When our Research Assistant analyzes a brick wall, or the Tree-Aware Genie generates a Life Story, it requires an expensive API call. Initially, I just cached the results, keyed by a hash of the prompt. But caching is an implementation detail: it has a Time-To-Live (TTL) and gets cleared. Users were losing AI-generated recipes and translated narratives that they considered part of their permanent archive.

💡 The Trap: Why not just save AI outputs into the standard notes or memories fields? Because it creates a catastrophic AI feedback loop! If AI-generated stories are saved as "evidence" in the notes, future AI prompts will consume those generations as facts, leading to cascading hallucinations.

The Solution: I just shipped a dedicated "aiArtifacts" storage layer. We explicitly separated the ephemeral cache (researchCache) from durable user data. Now, when the AI generates a Life Story, users have a dedicated "Save" button. This writes an explicit, encrypted "LifeStoryArtifact" to IndexedDB.
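The split between the two layers can be sketched in TypeScript like this. Everything here is a simplified stand-in: in-memory Maps play the role of the IndexedDB stores, encryption is omitted, and the field names and the buildEvidence helper are hypothetical. The point is only that cache entries expire, artifacts do not, and prompt context is built from user-authored notes alone.

```typescript
// Ephemeral: entries expire and can be dropped at any time.
interface ResearchCacheEntry {
  promptHash: string;
  response: string;
  expiresAt: number; // ms since epoch
}

// Durable: written only when the user explicitly clicks Save.
interface AiArtifact {
  id: string;
  kind: "LifeStoryArtifact";
  personId: string;
  body: string;
  savedAt: number;
}

// User-authored evidence, kept apart from anything the AI wrote.
interface Note {
  personId: string;
  text: string;
}

// Return a cached response only while it is within its TTL; evict it otherwise.
function readCache(
  cache: Map<string, ResearchCacheEntry>,
  promptHash: string,
  now: number,
): string | undefined {
  const entry = cache.get(promptHash);
  if (!entry) return undefined;
  if (entry.expiresAt <= now) {
    cache.delete(promptHash);
    return undefined;
  }
  return entry.response;
}

// Persist an artifact with no expiry.
function saveArtifact(store: Map<string, AiArtifact>, artifact: AiArtifact): void {
  store.set(artifact.id, artifact);
}

// Build prompt context from notes only. Artifacts are not accepted as input,
// so saved AI output cannot re-enter a future prompt as "evidence".
function buildEvidence(personId: string, notes: Note[]): string[] {
  return notes.filter((n) => n.personId === personId).map((n) => n.text);
}
```

Keeping artifacts out of the evidence function's signature is one way to make the feedback loop hard to reintroduce by accident.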

To handle data mutations (e.g., you save a Life Story, but later update the ancestor's birth year), the artifact stores a sourceHash of the person's data at the time of generation. When you load the person's profile, the app recalculates the current hash. If it doesn't match the artifact's sourceHash, the UI flags the saved story as stale, allowing the user to decide if they want to keep the old version or generate a new one.
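Here is a minimal TypeScript sketch of that staleness check. The details are assumptions for illustration: the flat PersonFacts shape is hypothetical, and a small non-cryptographic FNV-1a hash stands in for whatever hash the app really uses (a production version might prefer SHA-256 via crypto.subtle.digest). Keys are sorted before hashing so that property order alone never marks a story stale.

```typescript
// Hypothetical flat view of the person data a story was generated from.
type PersonFacts = Record<string, string | number | null>;

interface LifeStoryArtifact {
  personId: string;
  story: string;
  sourceHash: string; // hash of the person's data at generation time
  savedAt: number;
}

// Serialize with sorted keys so equal data always yields the same string.
function canonicalize(facts: PersonFacts): string {
  const keys = Object.keys(facts).sort();
  return JSON.stringify(keys.map((k) => [k, facts[k]]));
}

// 32-bit FNV-1a over UTF-16 code units: a simple stand-in, not cryptographic.
function sourceHash(facts: PersonFacts): string {
  const text = canonicalize(facts);
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  // Convert to unsigned and left-pad to 8 hex digits.
  return ("0000000" + (hash >>> 0).toString(16)).slice(-8);
}

// A saved story is stale when the person's current data no longer matches.
function isStale(artifact: LifeStoryArtifact, currentFacts: PersonFacts): boolean {
  return artifact.sourceHash !== sourceHash(currentFacts);
}
```

On profile load, a true result from isStale would drive the stale flag in the UI, and the user then chooses between keeping the saved story and regenerating it.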

Building for Ownership

Building a local-first application requires a lot more architectural overhead than just spinning up a standard PostgreSQL database on AWS. But when you see a user successfully translate a 19th-century document and save it locally without sacrificing their privacy, the extra engineering effort is completely worth it.

If you are building local-first apps or wrestling with AI data lifecycles, drop a comment below or connect with me. And if you want to see this architecture in action, try building your tree at Genealogix.ai.

Until next Tuesday, keep building!